To achieve Agility in manufacturing – embrace Variability – Part 1

Posted 1773 days ago

This isn’t a comparison between Apache Spark vs. Dask. But if you are interested in that, Dask is humble enough to include that in their official documentation. This blog is about my experience of using both of these frameworks to build applications.

At Quartic we have built multiple jobs using Apache Spark that performs ingress/egress, ETL, score models, etc on different kinds of data which are of both streaming and batch nature.

Spark’s rich set of APIs would make your job easy if you have everything as data frames and RDDs spread across multiple nodes in the cluster. But the problem lies in building the required data frames just so we can make use of the APIs provided by Spark.

We have data streaming at high frequency from multiple IoT sensors. Now imagine building a pipeline to serve thousands of machine learning models on top of this data using Apache Spark.

Here is a nice blog by Conor Murphy explaining training and deploying multiple models at scale. This is great, but in practice, we don’t just deploy one model per device. There can be multiple models per sensor, and there are also instances where predictions are used as features in other models.

But to generate streaming datasets for such use cases without introducing skewness to the pipelines requires careful selection of the partitioning strategy (whether to use device-id, model-id, or something else as the partition key to Kafka topics).

After enduring all the trouble of building pipelines that perform cleanups, ETLs, and denormalization on streaming data we realized that we couldn’t scale beyond a limit.

We either scale the cluster or increase the micro-batch intervals of the jobs to more than 30 seconds. And the max number of models that we could deploy in our cluster was also surprisingly small – 600 models on 24 cores with 24 Kafka partitions.

Our problem does not only deals with ML models, but it also has custom code being written by our users that has to be executed on streaming data, which may be used as features in multiple ML models. So our challenge was to find implementations that also resolved such dependencies.

This is not a straightforward task in Spark. We had to create different pipelines and loopbacks to accomplish this, not a very efficient solution if you’re already short on resources.

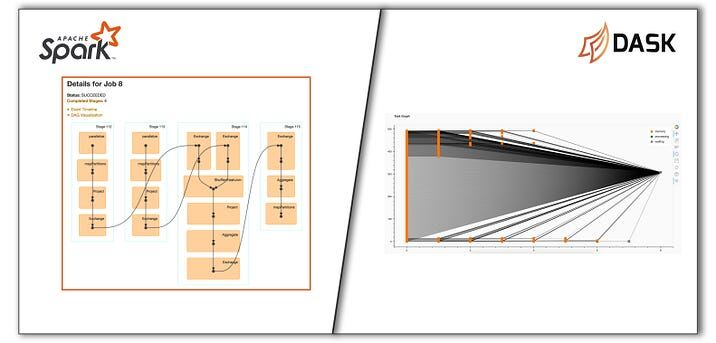

It became clear to us that if we can build a DAG ourselves, there is scope of avoiding some redundant work. Spark builds DAGs before executing jobs, but we couldn’t find any high-level APIs that we could use. We had used a small Python library called Graphkit to build an application previously.

So we turned to it again keeping aside the fact that Python’s GIL will not allow nodes to execute in parallel. And Graphkit turned out to be the solution for our problem of resolving dependencies in order.

It works well for small applications and toy projects/ POCs, but scaling is a challenge.

During one of our brainstorming sessions, Dinesh(my teammate) recommended Dask. I always thought it was for processing pandas dataframes at scale which is something that Spark is already good at. But I never knew of their other APIs.

And Dask’s Delayed API is exactly what we needed! Not only did it allow us to build the required DAG using low-level APIs, but it also allowed graphs to be executed in a distributed fashion at scale.

The API was powerful and we were able to quickly build a solution by clubbing Graphkit and Dask to serve thousands of models on the same 24 cores while also resolving the dependencies dynamically. It was a delight to see thousands of nodes being processed in seconds.

Startups often find themselves in situations where they don’t have much time for R&D. They have to use the tools they know will work in order to meet their delivery deadlines. Though this involves implementing workarounds, the solutions may not be the best.

For us at Quartic, we are always on the lookout for solutions to improve our platform; and we’re happy with how Dask has worked out for us.